This is a public statement of where my thinking sits at this moment. It is not a
manifesto. It is not a finished theory. It is a marker, so that future versions
of this work can be traced back to what I actually believed, and what I did not yet
know, when I started building.

The observation that motivates this work

Human-AI cognition is a coupled system.

Edwin Hutchins's foundational work on distributed cognition (Cognition in the Wild,
1995) showed that complex cognitive tasks, such as navigating a Navy ship across an
ocean, are not performed by individual minds that merely use tools as aids. They are
performed by the whole system: navigator, chart, compass, rangefinder, the bearings
being called out, the procedures, the other crew members, the ship itself. Remove the
compass and you don't have a navigator working without a key tool. You have a
fundamentally different cognitive system that computes position differently. The
thinking is in the configuration. The unit of analysis is the whole functional system,
not the individual mind.

This is what happens when a person works with a generative AI. The thinking is no
longer happening only inside the person's head. It is distributed across the person,
the model, and the artifacts they produce together. And the output of that system can
look indistinguishable whether the person did most of the cognitive work or almost
none of it. The artifact does not reveal who did the thinking.

Prior cognitive tools externalized capability visibly. The calculator shows
you what it computed. The map shows you the route. With those tools, the user always
knew where their work ended and the tool's began. The compass shows you the bearing,
but not the destination; the large language model shows you the destination, but hides
the bearing. Large language models externalize reasoning itself, and they do so
invisibly. A user can read a fluent answer and feel intellectually involved because
they touched the artifact, while the key reasoning transitions occurred outside them.
A programmer can accept a confident solution before fully modeling its logic. The user
often cannot detect, in the moment, when evaluation stopped and acceptance began.

I want to be clear about what this position is not. It is not anti-offloading.
Cognitive offloading is inevitable, often beneficial, and frequently the right move:
no one should compute compound interest by hand for the sake of cognitive
purity. The concern is not delegation itself. It is unexamined delegation,
where the boundary between human reasoning and machine reasoning becomes invisible to
the human inside the loop. This is the cognitive-offloading problem named by
researchers such as Gerlich (2025) and Kabashkin (2025), and it is the load-bearing
concern in the recent work of UIUC's Mary Frances Phillips and Koustuv Saha (2026).
The question is not whether AI is dangerous. The question is what kind of thinker the
human becomes when most of their thinking is delegable, and they cannot see, in the
moment, that the delegation is happening.

The framework I am operating within

The cognitive dynamic between a human and an AI falls into one of three states.
Negative-sum: the human's capacity atrophies. The system produces good
output, but the person doing the work loses some of their ability to do it without the
AI. Zero-sum: the AI substitutes for the human without growth or loss. The
task gets done, but the human doesn't develop. Positive-sum: the AI frees
the human into thinking they could not have reached alone. The human grows by
working with the system.

Which state any given interaction occupies is set by the human's engagement.
And engagement is precisely the variable that is invisible to both the user and any
external system.

I believe most current AI interactions sit in the zero-sum or negative-sum state by
default. Not because users are careless, but because nothing in the system makes the
cognitive distribution visible to them. The ship's navigator can see the compass. The
AI user cannot see where their thinking ends and the model's begins.

The direction this work is pointed

The dominant response to this problem locates the safeguard inside the AI itself:
train models to refuse when refusal would protect the user from offloading. This is
the work serious people are doing, and it matters. But it is incomplete. Refusal
systems are unreliable, institutional adoption lags, and the user remains the only
party present in every interaction.

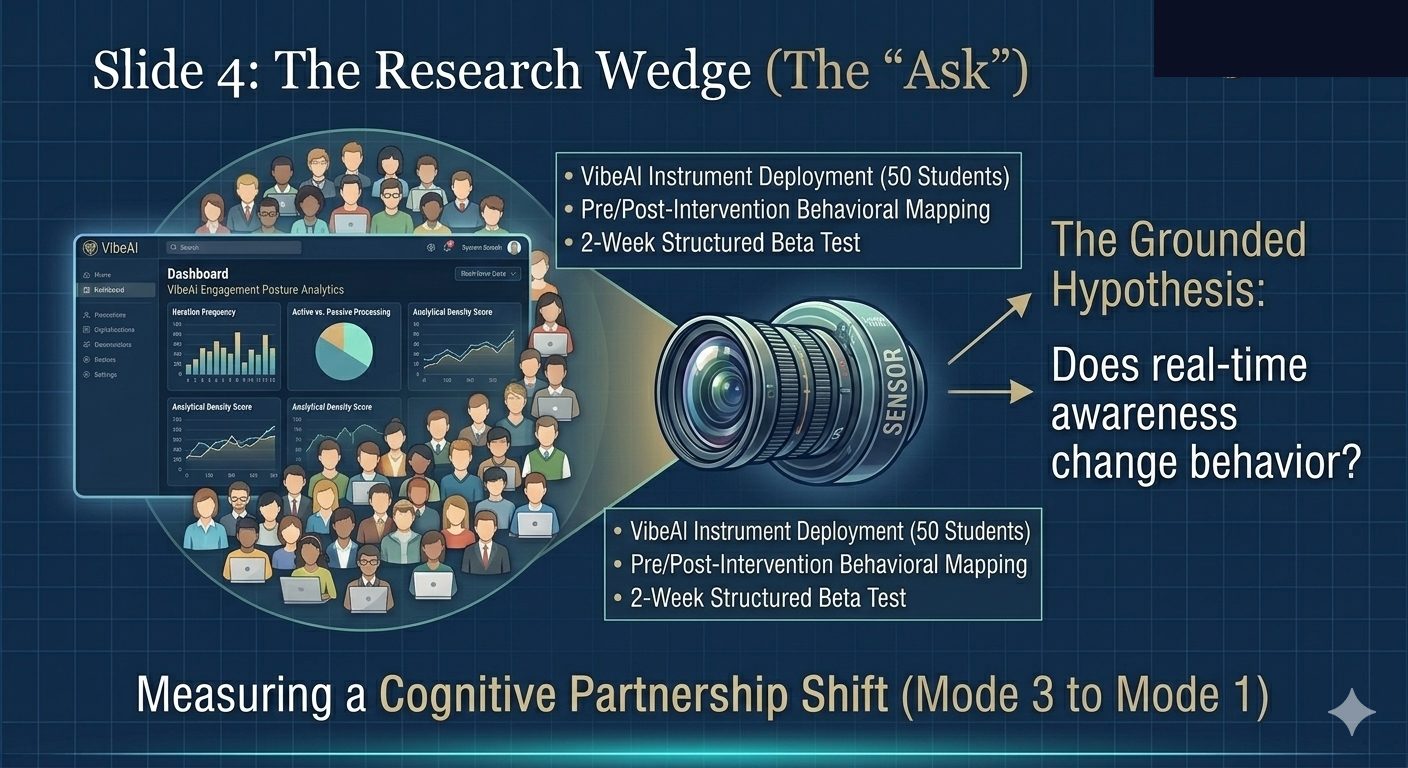

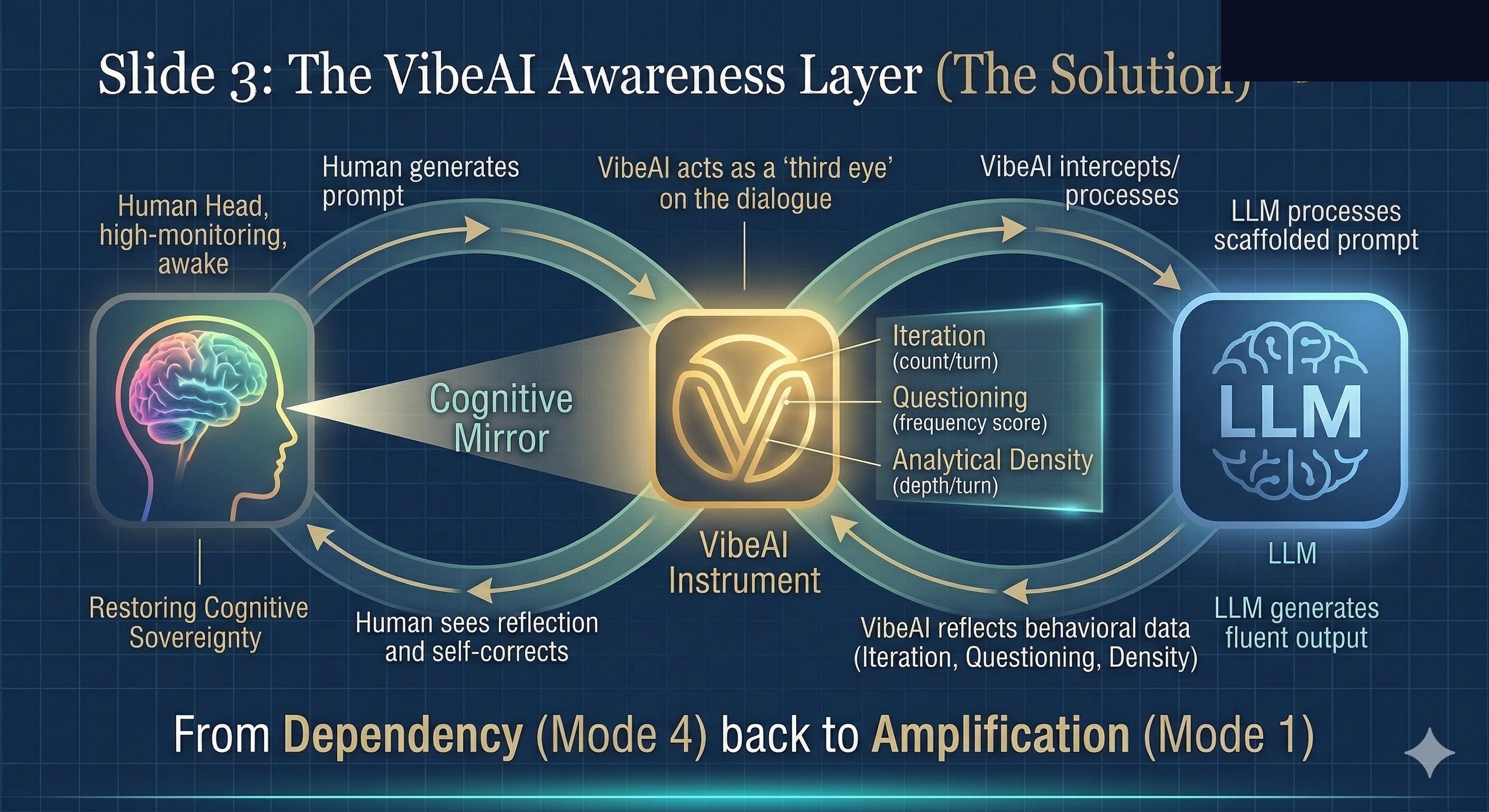

Beyond the AI-system layer and the institutional layer, I am building toward a third:
a user-facing reflection layer that makes the user's own cognitive engagement
visible to them in real time, without forcing judgment from the system itself. The aim
is not to police the user. The aim is sovereign awareness, by which I
mean the user's ability to see, evaluate, interrupt, and redirect the thinking
happening across themselves and the system. The user remains the active, governing
party in their own cognition, with the AI serving as the instrument rather than the
agent.

This is the bet. The instrument exists in early form. Whether it works at scale is an
empirical question I cannot answer alone. The work, and this statement, are public so
that the position is on record and the question is examinable.

— Joseph Tingling, MD/PhD

Hugonomy — May 2026

Also published on Substack:

Where Hugonomy Stands — A Position, Not a Conclusion →

References

- Arditi, A., Obeso, O., Syed, A., Paleka, D., Panickssery, N., Gurnee, W., & Nanda, N.
  (2024). Refusal in language models is mediated by a single direction.
  Advances in Neural Information Processing Systems, 37.
  https://arxiv.org/abs/2406.11717

- Gerlich, M. (2025). AI tools in society: Impacts on cognitive offloading and the
  future of critical thinking. Societies, 15(1), Article 6.
  https://doi.org/10.3390/soc15010006

- Hutchins, E. (1995). Cognition in the wild. MIT Press.

- Kabashkin, I. (2025). Cognitive atrophy paradox of AI–human interaction: From
  cognitive growth and atrophy to balance. Information, 16(11), Article 1009.
  https://doi.org/10.3390/info16111009

- Phillips, M. F., & Saha, K. (2026, April). Ethical guidelines for AI. University
  of Illinois Urbana-Champaign.

- Qazi, I. A., Ali, A., Khawaja, A. U., Akhtar, M. J., Sheikh, A. Z., & Alizai, M. H.
  (2025). Automation bias in large language model–assisted diagnostic reasoning
  among physicians trained in AI literacy: A randomized clinical trial.
  NEJM AI.
  https://doi.org/10.1056/AIoa2501001

- Zhang, Y., Li, M., Han, W., Yao, Y., Cen, Z., & Zhao, D. (2025). Safety is not only
  about refusal: Reasoning-enhanced fine-tuning for interpretable LLM safety.
  arXiv preprint arXiv:2503.05021.
  https://arxiv.org/abs/2503.05021