About

Built by a physician-scientist.

For people who still want to think.

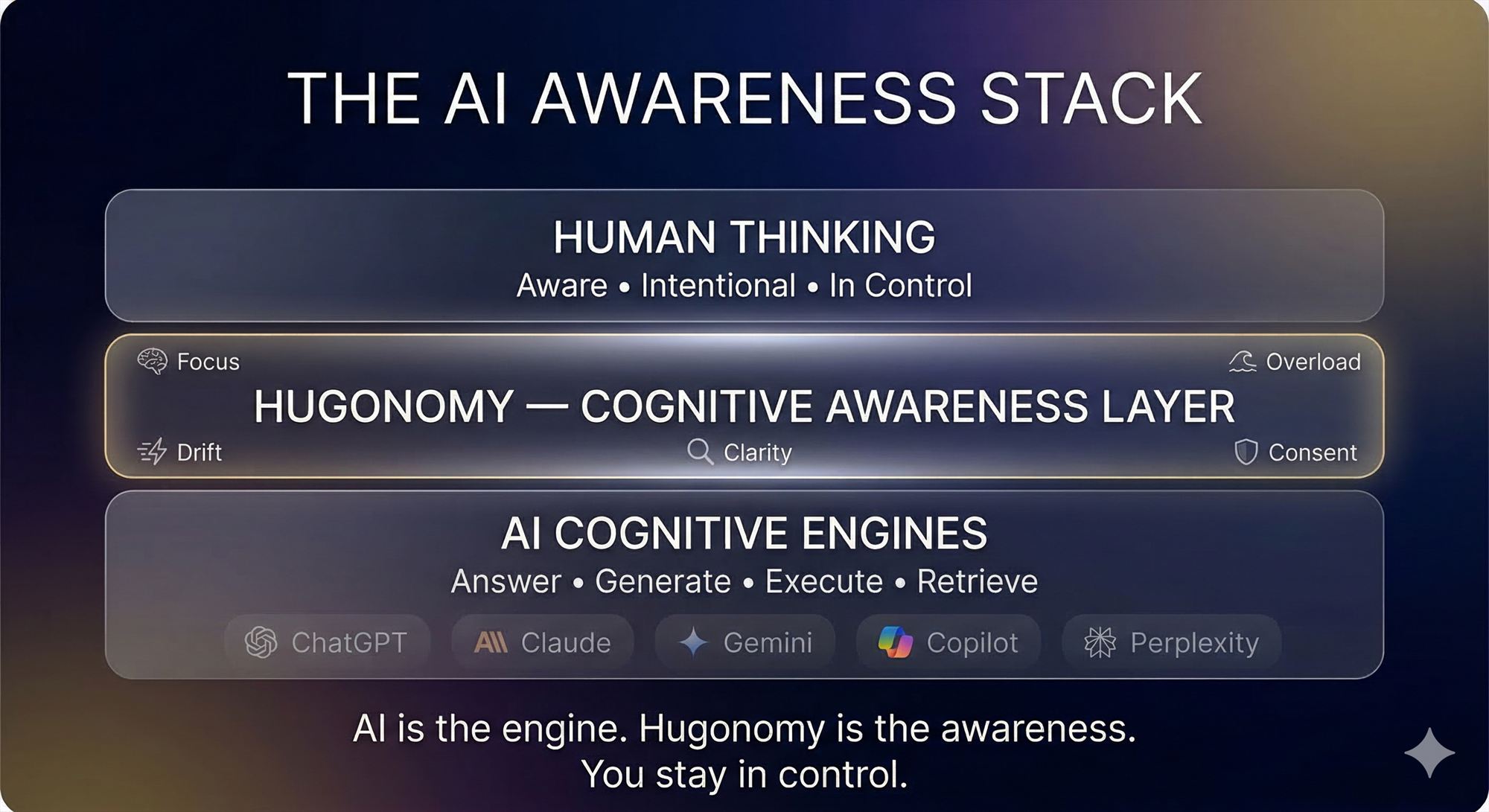

Hugonomy Systems is a bootstrapped company building tools that help people stay aware while using AI. Founded in Urbana, Illinois.
